Open Science

As a research group, we firmly believe that science is best when it is open. Whenever our research questions require new tools, we make an effort to develop these tools such that they can also be used by others. This includes sharing code, technical drawings, print files, documentation and much more – have a look at some of our projects below, and get in touch if you want to contribute, or have any questions about using these tools in your own work!

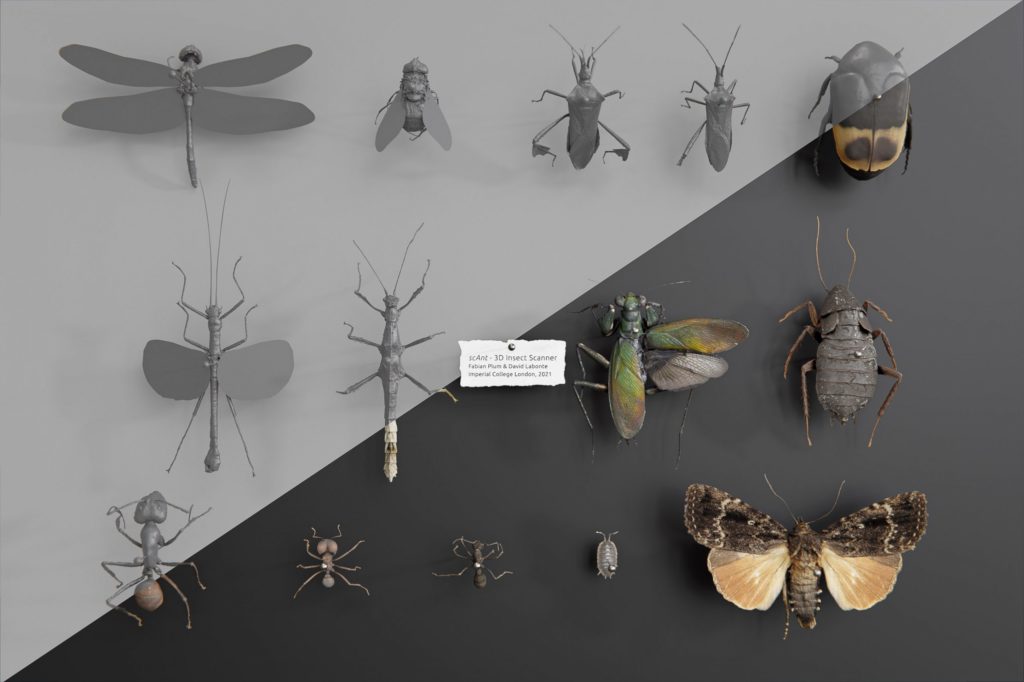

scAnt

scAnt is an open-source platform for the creation of digital 3D models of arthropods (and other small objects). It consists of a scanner and a Graphical User Interface (GUI), and enables the automated generation of multi-view Extended Depth Of Field (EDOF) images. These images are then masked with a novel automatic routine which combines random forest-based edge-detection, adaptive thresholding, and connected component labelling. The masked images can then be processed further with a photogrammetry software package of choice, including open-source options such as Meshroom, to create high-quality, textured 3D models. Because scAnt relies exclusively on generic hardware components, rapid prototyping, and open-source software, it costs only a fraction of the price of comparable systems. The resulting accessibility of scAnt will (i) drive the development of novel and powerful methods for machine learning-driven behavioural studies, leveraging synthetic data; (ii) increase accuracy in comparative morphometric studies as well as extend the available parameter space with area and volume measurements; (iii) inspire novel forms of outreach; and (iv) aid in the digitisation efforts currently underway in several major natural history collections.
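To give a feel for two of the masking steps named above, here is a minimal sketch of adaptive thresholding followed by connected component labelling. It is not scAnt's actual routine: the random forest-based edge detection step is omitted, the function names (`adaptive_threshold`, `largest_component`, `mask_specimen`) and the parameters `block` and `offset` are hypothetical, and it assumes a dark specimen on a bright background with NumPy as the only dependency.

```python
from collections import deque

import numpy as np


def adaptive_threshold(gray, block=31, offset=5.0):
    """Flag pixels darker than their local mean by more than `offset`.

    `block` is the (odd) side length of the square neighbourhood.
    """
    pad = block // 2
    padded = np.pad(gray.astype(np.float64), pad, mode="edge")
    # Integral image with a leading zero row/column for fast window sums.
    integral = np.pad(padded, ((1, 0), (1, 0))).cumsum(axis=0).cumsum(axis=1)
    h, w = gray.shape
    window_sum = (
        integral[block:block + h, block:block + w]
        - integral[:h, block:block + w]
        - integral[block:block + h, :w]
        + integral[:h, :w]
    )
    local_mean = window_sum / (block * block)
    return gray < local_mean - offset


def largest_component(mask):
    """Keep only the largest 4-connected foreground region of a boolean mask."""
    labels = np.zeros(mask.shape, dtype=np.int32)
    sizes = {}
    h, w = mask.shape
    current = 0
    for sy, sx in zip(*np.nonzero(mask)):
        if labels[sy, sx]:
            continue  # pixel already belongs to a labelled region
        current += 1
        labels[sy, sx] = current
        queue = deque([(sy, sx)])
        size = 0
        while queue:  # breadth-first flood fill of one region
            y, x = queue.popleft()
            size += 1
            for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                if 0 <= ny < h and 0 <= nx < w and mask[ny, nx] and not labels[ny, nx]:
                    labels[ny, nx] = current
                    queue.append((ny, nx))
        sizes[current] = size
    if not sizes:
        return np.zeros(mask.shape, dtype=bool)
    return labels == max(sizes, key=sizes.get)


def mask_specimen(gray, block=31, offset=5.0):
    """Threshold the image, then discard everything but the largest region."""
    return largest_component(adaptive_threshold(gray, block, offset))
```

Keeping only the largest connected region is one simple way to discard dust specks and background clutter that survive thresholding. Note that a neighbourhood smaller than the specimen would flag only its outline, so a full pipeline would typically combine the threshold with an edge map and fill the enclosed region.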

scAnt across the globe – if yours is missing, let us know!

Build your own!

replicAnt

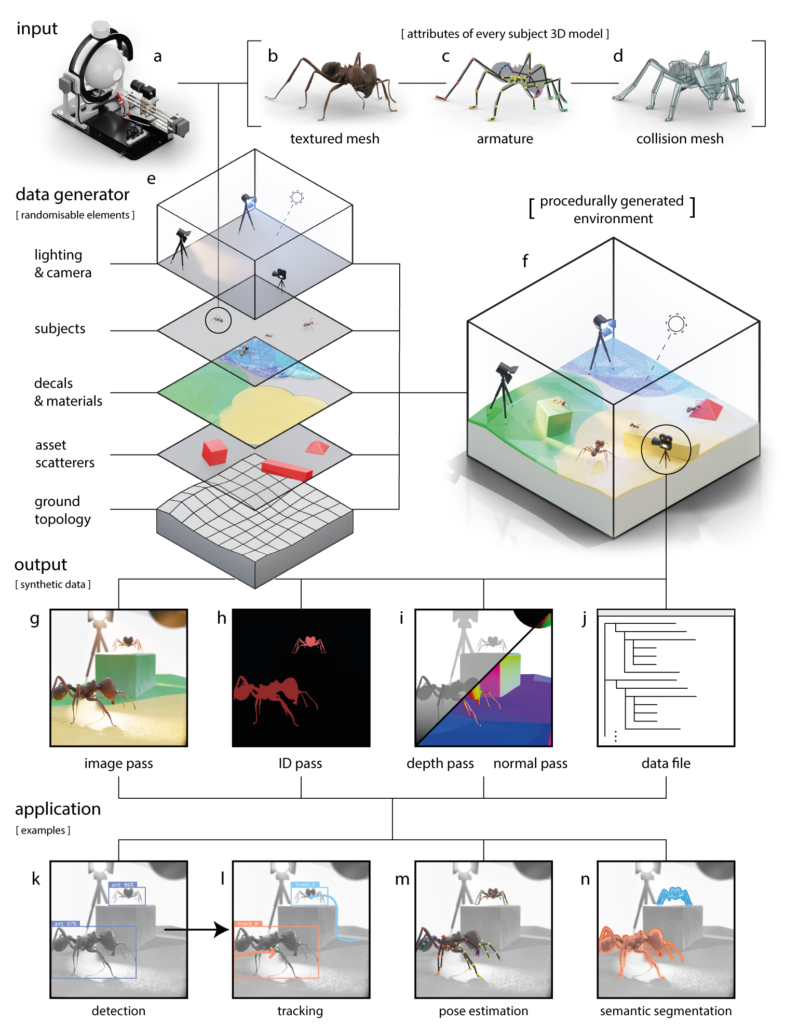

Deep learning-based computer vision methods are transforming animal behavioural research. Transfer learning has enabled work in non-model species, but still requires hand-annotation of example footage, and performs well only under well-defined conditions. To help overcome these limitations, we developed replicAnt, a configurable pipeline implemented in Unreal Engine 5 and Python, designed to generate large and variable training datasets on consumer-grade hardware. replicAnt places 3D animal models into complex, procedurally generated environments, from which automatically annotated images can be exported. We demonstrate that synthetic data generated with replicAnt can significantly reduce the hand-annotation required to achieve benchmark performance in common applications such as animal detection, tracking, pose-estimation, and semantic segmentation. We also show that it increases the subject-specificity and domain-invariance of the trained networks, thereby conferring robustness. In some applications, replicAnt may even remove the need for hand-annotation altogether. It thus represents a significant step towards porting deep learning-based computer vision tools to the field.
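Why can synthetic images be annotated automatically? Because the 3D position of every body part is known to the renderer, 2D labels follow from projecting those positions into the image. The sketch below illustrates this idea with a simple pinhole camera model. It is not replicAnt code: the function names, the keypoint names, and the camera parameters are all hypothetical, and the points are assumed to be given in camera coordinates with positive depth.

```python
def project_point(point, focal_px, cx, cy):
    """Pinhole projection of a camera-space point (x, y, z), z > 0, to pixels."""
    x, y, z = point
    return (focal_px * x / z + cx, focal_px * y / z + cy)


def annotate(keypoints_3d, focal_px, width, height):
    """Derive 2D keypoints and a bounding box from known 3D keypoints."""
    cx, cy = width / 2, height / 2  # principal point assumed at image centre
    keypoints_2d = {
        name: project_point(p, focal_px, cx, cy) for name, p in keypoints_3d.items()
    }
    xs = [u for u, _ in keypoints_2d.values()]
    ys = [v for _, v in keypoints_2d.values()]
    # Clip the box to the image so partially visible animals stay valid.
    bbox = (
        max(0.0, min(xs)),
        max(0.0, min(ys)),
        min(float(width), max(xs)),
        min(float(height), max(ys)),
    )
    return {"keypoints": keypoints_2d, "bbox": bbox}


if __name__ == "__main__":
    labels = annotate(
        {"head": (0.125, 0.0, 1.0), "abdomen": (-0.125, 0.0625, 1.0)},
        focal_px=1000.0,
        width=640,
        height=480,
    )
    print(labels["bbox"])
```

A bounding box spanned by keypoints is only a rough stand-in; a rendering engine can also export per-pixel masks, from which tighter boxes and segmentation labels follow directly.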

Start generating today!

OmniTrax

OmniTrax is a deep learning-driven multi-animal tracking and pose-estimation Blender Add-on. It provides an intuitive, high-throughput tracking solution for large groups of freely moving subjects by leveraging recent advances in deep learning-based detection and computationally inexpensive buffer-and-recover tracking approaches. Combining automated tracking with the Blender-internal motion tracking pipeline streamlines the annotation and analysis of large video files with hundreds of freely moving individuals. Additionally, OmniTrax integrates DeepLabCut-Live to enable markerless pose-estimation on arbitrary numbers of animals. We leverage the existing DeepLabCut Model Zoo as well as custom-trained detector and pose-estimator networks to facilitate large-scale behavioural studies of social animals.
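The general idea behind a buffer-and-recover tracker can be sketched in a few lines: a track that loses its detection is not deleted immediately but kept in a buffer for a few frames, so the same identity can be recovered if the animal is detected again nearby. The example below is an illustration under stated assumptions, not OmniTrax's implementation: the class `BufferedTracker`, its parameters `max_distance` and `buffer_frames`, and the greedy nearest-neighbour matching are all hypothetical choices, and detections are assumed to be 2D centroids in pixels.

```python
import math
from dataclasses import dataclass


@dataclass
class Track:
    track_id: int
    position: tuple
    missed: int = 0  # consecutive frames without a matching detection


class BufferedTracker:
    def __init__(self, max_distance=30.0, buffer_frames=5):
        self.max_distance = max_distance    # largest allowed jump between frames
        self.buffer_frames = buffer_frames  # frames a lost track is kept alive
        self.tracks = []
        self._next_id = 0

    def update(self, detections):
        """Match one frame of (x, y) detections; return the visible tracks."""
        unmatched = list(detections)
        # Greedy matching; tracks seen most recently get first pick.
        for track in sorted(self.tracks, key=lambda t: t.missed):
            nearest = min(
                unmatched,
                key=lambda d: math.dist(d, track.position),
                default=None,
            )
            if nearest is not None and math.dist(nearest, track.position) <= self.max_distance:
                track.position = nearest
                track.missed = 0  # recovered, or simply continued
                unmatched.remove(nearest)
            else:
                track.missed += 1  # keep the track buffered for now
        # Drop tracks that stayed lost for longer than the buffer allows.
        self.tracks = [t for t in self.tracks if t.missed <= self.buffer_frames]
        # Every detection left over starts a new identity.
        for detection in unmatched:
            self.tracks.append(Track(self._next_id, detection))
            self._next_id += 1
        return {t.track_id: t.position for t in self.tracks if t.missed == 0}
```

Greedy matching is cheap but can swap identities when animals cross paths; an optimal assignment (for example the Hungarian algorithm) or a motion model would be natural refinements.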